Tools Used

Personal Learnings

Throughout this project, I gained experience developing a full-stack application and managing its source code with Git, hosted on GitHub.

I learned that image preprocessing is a crucial step before any computer vision task: adjusting brightness and clarity and adding markup before classification yields much better results.
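To illustrate the kind of brightness adjustment meant here, below is a minimal sketch of min-max contrast stretching on a grayscale image. It is not the project's actual pipeline: the function name `stretch_contrast` is hypothetical, and the image is assumed to be a plain nested list of 0-255 values so the example runs without any libraries. In practice this step would more likely be done with an image library.

```python
def stretch_contrast(image, low=0, high=255):
    """Linearly rescale pixel values so the darkest pixel maps to `low`
    and the brightest pixel maps to `high` (min-max contrast stretching)."""
    flat = [pixel for row in image for pixel in row]
    darkest, brightest = min(flat), max(flat)
    if brightest == darkest:
        # A uniform image has no contrast to stretch; return an unchanged copy.
        return [list(row) for row in image]
    scale = (high - low) / (brightest - darkest)
    return [[round(low + (pixel - darkest) * scale) for pixel in row]
            for row in image]


# Hypothetical dim, low-contrast 2x3 grayscale image.
dim = [[50, 60, 70],
       [80, 90, 100]]
stretched = stretch_contrast(dim)
```

After stretching, the pixel values span the full 0-255 range, which gives a classifier more usable contrast than the original narrow band of 50-100.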

Training an AI model using TensorFlow is quite delightful, once the model actually works, that is. Before that, lots and lots of retraining is required. Although I did not delve deep into hyperparameter tuning, I got an overview of the AI project cycle.

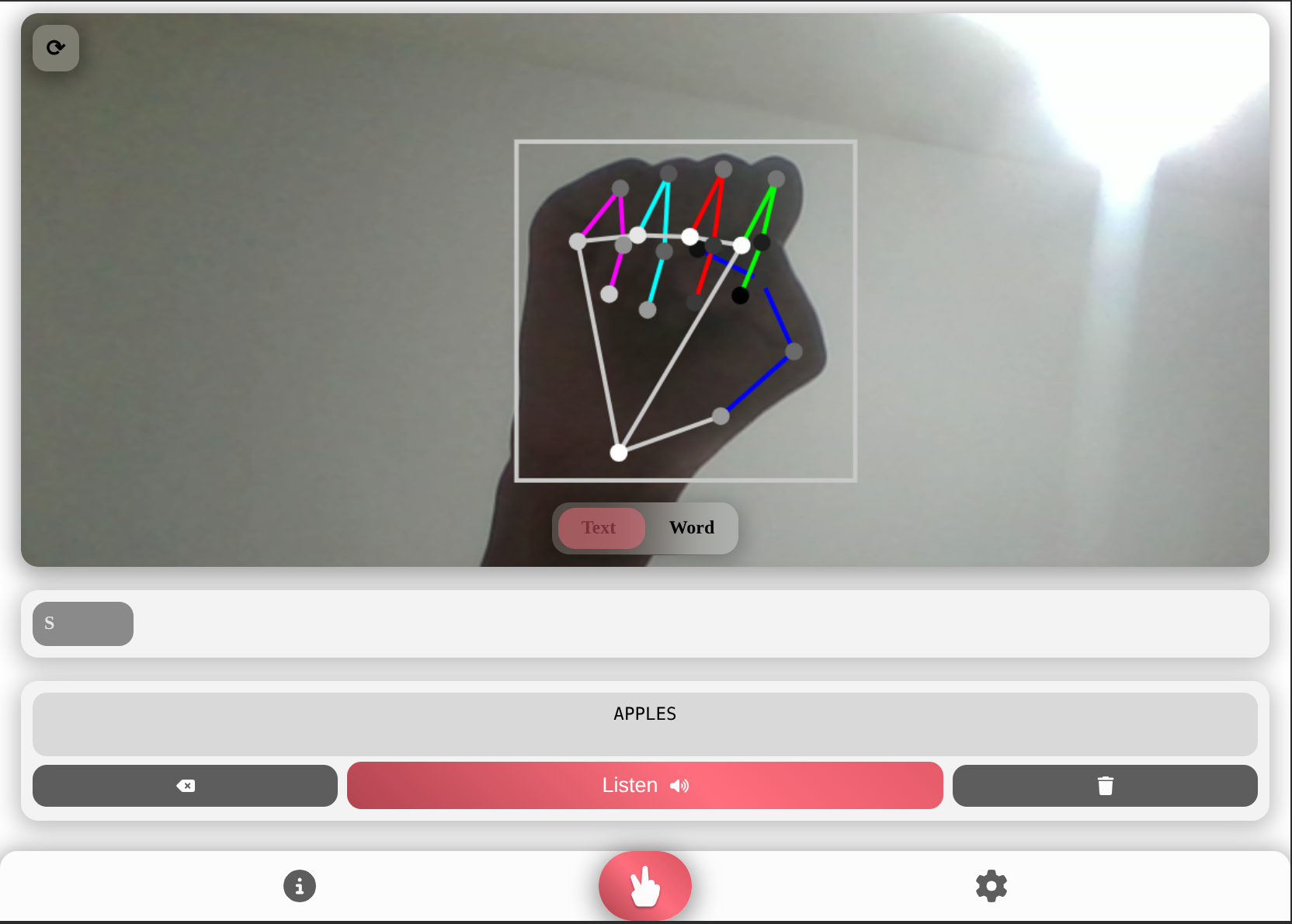

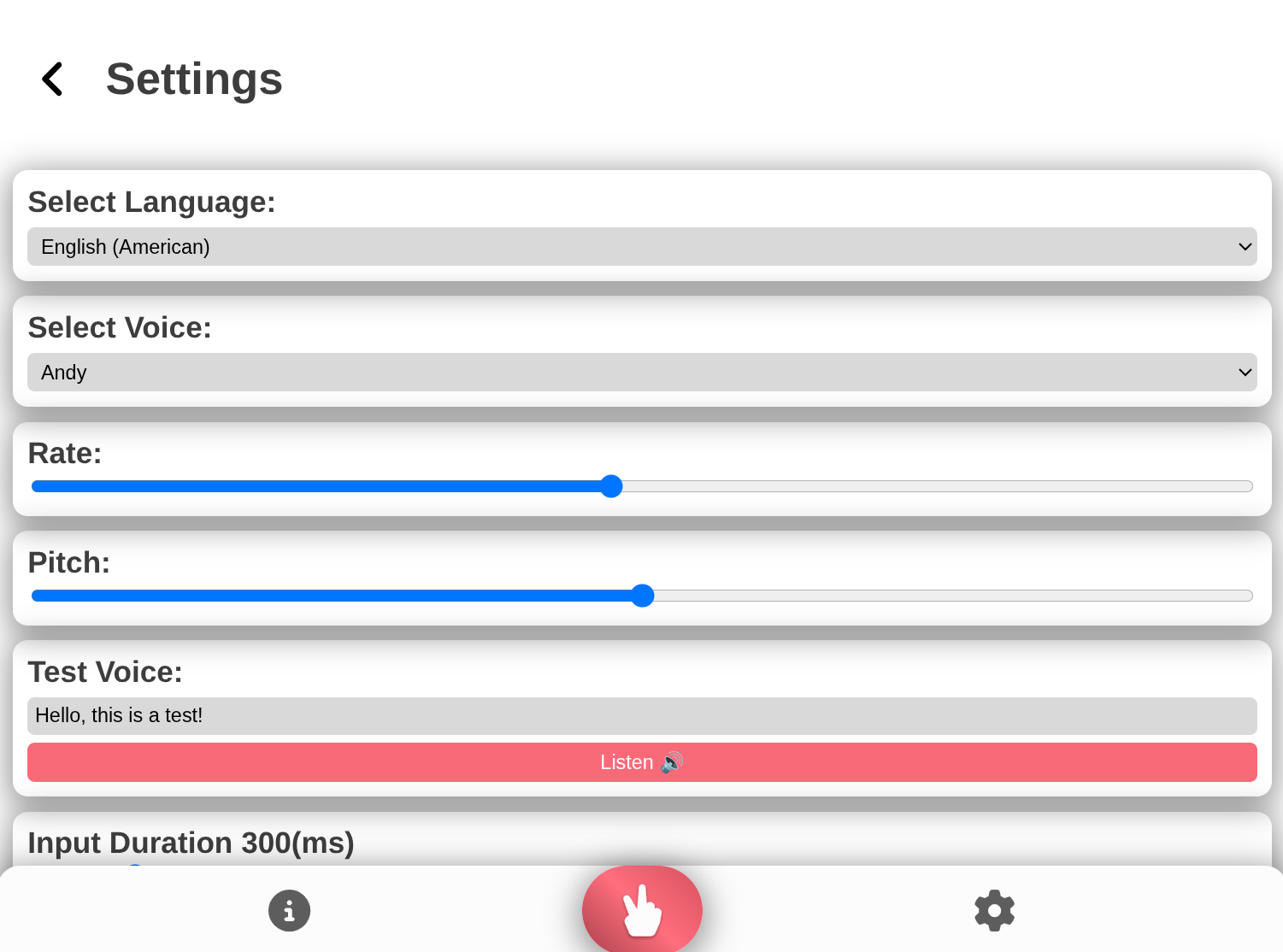

On the frontend side, I explored many layouts and workflows until I arrived at the right one. I then had to style the app consistently and make it responsive.

Finally, I believe the most important lesson I learned here was that it's acceptable to change approach: I was stuck for quite some time trying to develop the whole app in Python before taking a look at alternatives like AndroidJS.

Next Steps

Although I am quite proud of how this project turned out, there is plenty of room for improvement.

First, I'll readily admit the code is a mess, and if I were to return to it, I would be quite lost. Hence, I really need to start adopting consistent coding practices, such as naming conventions and commenting.

Additionally, I wish to try out more types of models. Currently, MediaPipe is not used directly, but rather as an intermediate step. I would like to experiment with a model that predicts solely from the MediaPipe mesh, as that might reduce complexity, although it could make feature extraction during data collection a bit more challenging.
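As a rough idea of what that feature extraction could look like, here is a minimal sketch that turns a list of landmarks into a flat feature vector. Everything in it is an assumption for illustration: the helper name `landmarks_to_features` is hypothetical, the landmarks are assumed to arrive as plain `(x, y, z)` tuples rather than MediaPipe's own result objects, and normalising relative to the first landmark is just one possible choice.

```python
import math


def landmarks_to_features(landmarks):
    """Flatten (x, y, z) landmarks into one feature vector that does not
    depend on where the mesh sits in the frame or how large it appears."""
    if not landmarks:
        return []
    # Use the first landmark as the origin (assumption: it is a stable anchor).
    ox, oy, oz = landmarks[0]
    centred = [(x - ox, y - oy, z - oz) for x, y, z in landmarks]
    # Scale by the distance to the farthest landmark from the origin.
    scale = max(math.sqrt(x * x + y * y + z * z) for x, y, z in centred)
    if scale == 0:
        scale = 1.0  # all landmarks coincide; avoid dividing by zero
    return [coord / scale for point in centred for coord in point]


# Hypothetical three-landmark sample in normalised image coordinates.
sample = [(0.5, 0.5, 0.0), (0.5, 0.7, 0.0), (0.9, 0.5, 0.0)]
features = landmarks_to_features(sample)
```

A vector like this could be fed straight into a small dense classifier, which is where the reduced complexity would come from. The trade-off is that every training sample then needs landmarks extracted and saved at collection time.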